The $20,000 “Accident” That Turned Into a $1.8 Billion AI Company

(And Why Most People Will Miss the Real Lesson)

AI Billionaire: By any traditional business standard… this story should not exist.

A man with $20,000… no team… no investors… and a laptop quietly builds a company now on pace to do $1.8 BILLION in revenue.

No boardroom. No funding rounds. No Silicon Valley hype machine.

Just speed… leverage… and something most people still don’t understand.

Let me explain.

The AI Story Everyone Is Talking About

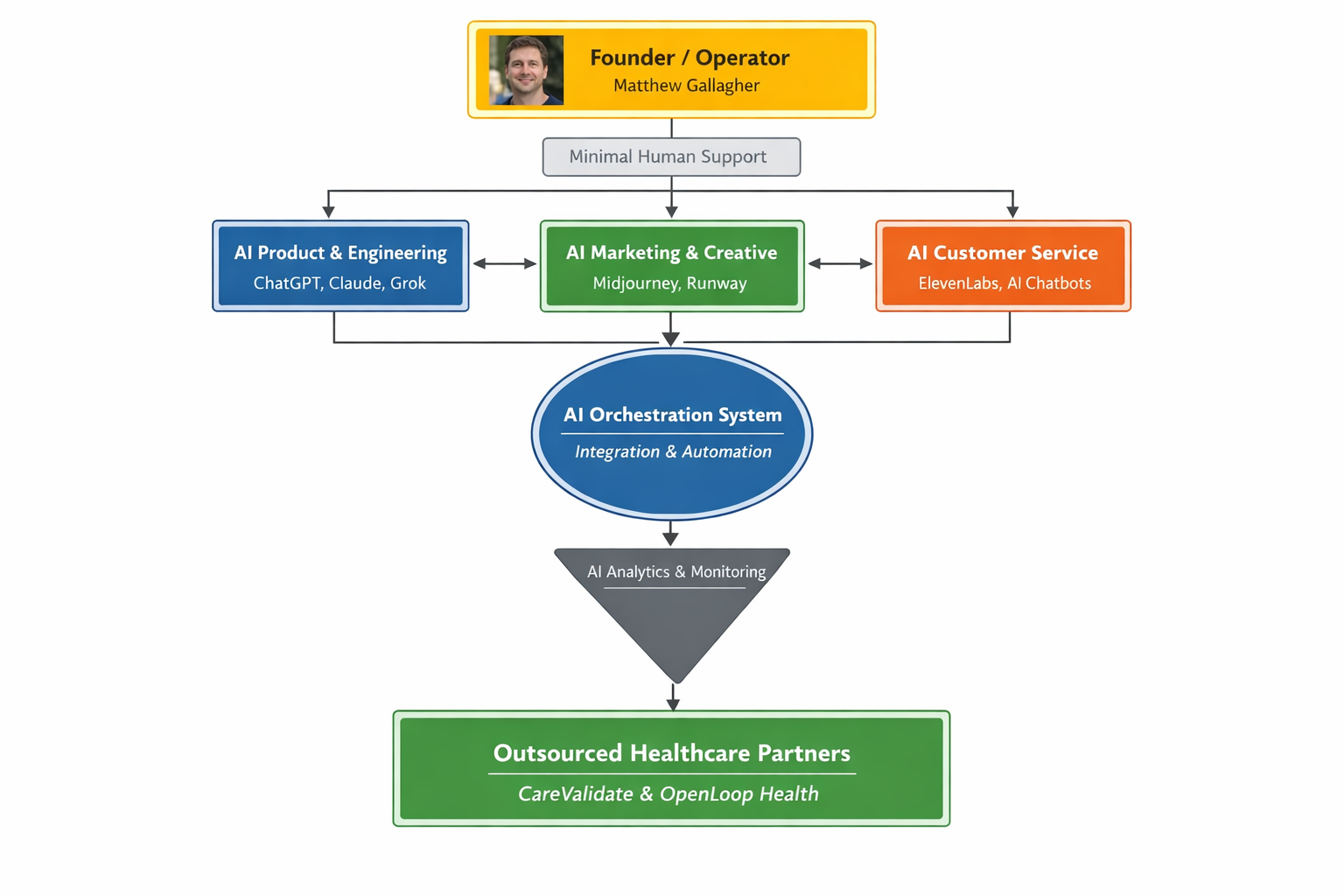

A founder named Matthew Gallagher launched a telehealth company called Medvi.

In just one year, it reportedly:

- Generated $401 million in revenue

- Served 250,000 customers

- Produced over $65 million in profit

And now?

They’re on track to hit $1.8 billion… with essentially two people running the entire operation.

Let that sink in.

Two people.

Not two hundred.

The Dangerous Lie You’re Being Sold

Now here’s where most people get it wrong…

They look at this story and say:

“AI is the secret.”

That’s lazy thinking.

Because if AI alone built billion-dollar companies…

You’d already be rich.

What Actually Happened (The Part Nobody Wants To Admit)

This wasn’t an “AI company.”

It was a perfect storm of 3 forces:

1. Timing (He Surfed a Tsunami)

Medvi sells GLP-1 weight-loss drugs, one of the hottest and most desperate markets on earth right now, for $179 US per month.

People aren’t “considering” buying…

They’re urgently searching for a solution.

And when demand explodes like that…

Even average marketing prints money.

2. Business Design (He Didn’t Build—He Assembled)

Here’s the real genius move:

- He didn’t employ doctors.

- He didn’t build pharmacies.

- He didn’t handle logistics.

He outsourced everything complex…

And focused on ONE thing:

Customer acquisition.

In other words…

He built the front-end money machine and let other companies handle the messy backend.

3. AI Leverage (The Multiplier—Not The Magic)

AI didn’t create the opportunity.

It compressed the cost of execution.

- Marketing → AI

- Copywriting → AI

- Customer support → AI

- Coding → AI

What used to require 15+ employees… was done by one operator with tools.

That’s the real shift.

The Part They Don’t Put In The Headlines

Now here’s where it gets interesting…

And a little uncomfortable.

There are serious questions around how this growth happened:

- Allegations of fake doctor profiles used in ads

- AI-generated testimonials and images

- Regulatory scrutiny, including FDA warnings about misleading claims

Translation?

Speed without control can turn into a liability.

Fast.

If I were analyzing this…

I wouldn’t talk about AI.

I’d say this:

“This is a marketing story disguised as a tech story.”

Because the truth is:

- The list mattered more than the code

- The offer mattered more than the algorithm

- The market mattered more than the machine

AI just made it faster.

What This Means For YOU (And Your Business)

You don’t need to build the next Medvi.

But you’d be crazy to ignore what it reveals.

Here’s the shift happening right now:

OLD WORLD:

- Build team

- Raise money

- Scale slowly

NEW WORLD:

- Find demand

- Build a funnel

- Use AI to execute instantly

The Opportunity (If You’re Paying Attention)

Right now…

There are “mini-Medvi” opportunities everywhere:

- Anxiety relief (your lane 👀)

- Weight loss

- Sleep

- Focus

- Performance

The winners won’t be the smartest.

They’ll be the ones who:

- Pick a starving market

- Create a simple, irresistible offer

- Use AI systems to move faster than everyone else

Final Thought

This story isn’t about a billion-dollar company.

It’s about a new rulebook:

You no longer need resources to build something big… You need a system, leverage, speed, and the courage to execute.

Most people will read this…

Be impressed…

And do nothing.

A few will realize:

The barrier to entry just collapsed. Think high-end products, think systems, think legal, think...

The Legal and Regulatory Challenges

Based on Medvi’s unique operating structure—a lean, AI-driven front-end acting as a middleman for GLP-1 weight-loss medications—the company faces a complex web of legal and regulatory challenges.

By replacing human operators with off-the-shelf artificial intelligence and outsourcing clinical care, Medvi trades traditional overhead risks for severe compliance vulnerabilities.

Here is a breakdown of the primary legal challenges Medvi faces:

1. Data Privacy and HIPAA Compliance with AI

Because Medvi acts as the customer-facing interface for telehealth patients, it inherently collects Protected Health Information (PHI). Using third-party AI tools (like ChatGPT, Claude, and ElevenLabs) to handle customer service, intake, or operations is a massive legal minefield.

The Risk: Under the Health Insurance Portability and Accountability Act (HIPAA), PHI must be protected with appropriate administrative, technical, and physical safeguards, and any vendor that handles PHI on the company's behalf must sign a Business Associate Agreement (BAA). If Medvi feeds patient inquiries or medical data into commercial AI models that use that data for training, or into tools that lack proper encryption and access controls, the company faces catastrophic federal fines and class-action lawsuits over data breaches.

2. AI Hallucinations and Consumer Protection

The company has already experienced AI hallucinations, such as its customer service bot inventing non-existent products and quoting incorrect drug prices.

FTC Violations: The Federal Trade Commission (FTC) polices "unfair or deceptive acts or practices." If an AI agent misstates pricing, availability, or refund policies to consumers, Medvi can be held responsible for those statements and penalized for deceptive business practices.

Unlicensed Medical Advice: The most dangerous risk is an AI customer service agent answering a patient's medical question. If the ElevenLabs voice agent or text chatbot dispenses medical advice, Medvi could be sued for the unauthorized practice of medicine or face severe liability if a patient is harmed after following the AI's guidance.

3. The Corporate Practice of Medicine (CPOM) and Outsourcing Liability

Medvi relies on partners like OpenLoop Health to handle the actual physicians and prescriptions. However, many states have strict "Corporate Practice of Medicine" laws, which dictate that non-physicians (like Matthew Gallagher) cannot own medical practices or unduly influence clinical decisions.

The Risk: While Medvi operates legally as a Management Services Organization (MSO) that merely provides the "marketing and tech" for licensed doctors, the line can easily blur. If regulators determine that Medvi's AI algorithms or checkout flows are pressuring doctors to prescribe GLP-1s simply to drive revenue, or bypassing proper doctor-patient establishment protocols, Medvi could be shut down for illegally practicing medicine.

4. FDA Advertising and Marketing Regulations

Marketing prescription drugs, particularly high-demand GLP-1s, requires strict adherence to Food and Drug Administration (FDA) guidelines. Drug advertisements must include a "fair balance" of benefits and risks (side effects).

The Risk: Medvi uses AI tools like Midjourney and Runway to rapidly generate ad creatives, and LLMs to write copy. AI models do not inherently understand FDA compliance. If an AI-generated ad exaggerates weight-loss claims, fails to list the severe side effects of GLP-1s, or promotes the drug for unapproved off-label uses, Medvi could face immediate injunctions and fines from the FDA.

5. Intellectual Property and Copyright Vulnerabilities

Because Medvi was built using AI for its code, website copy, and marketing imagery, the company essentially owns very little protectable Intellectual Property (IP).

The Risk: Under current US Copyright Office guidance, purely AI-generated content cannot be copyrighted, because copyright requires human authorship. This means competitors could legally copy Medvi’s website text and ad imagery, and potentially even reverse-engineer its AI-written codebase, without Medvi having strong legal recourse to stop them. The company's value, including its $1.8 billion revenue pace, rests largely on brand momentum rather than on defensible, proprietary IP.

Important Warning

(if such a thing exists ;-)

Medvi's founders are operating under the immense pressure of being the sole human backstops for automated systems in a highly regulated industry. Their primary legal battle will be proving to federal regulators that their AI layer is a secure, compliant administrative tool rather than a reckless, autonomous medical provider.

References

https://www.nytimes.com/2026/04/02/technology/ai-billion-dollar-company-medvi.html